Brain-Computer Interface Pulse Encoding

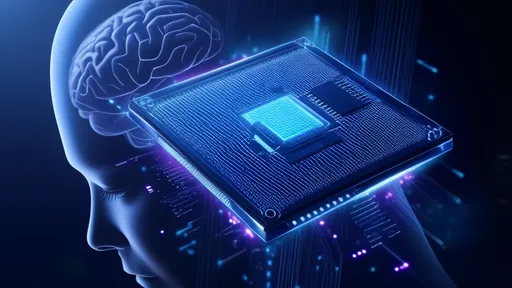

The field of neurotechnology has taken a revolutionary leap forward with the advent of brain-machine interface (BMI) chips capable of interpreting and generating neural pulse codes. These devices, once confined to the realm of science fiction, are now being tested in clinical trials, offering hope for patients with severe motor disabilities and opening new frontiers in human-computer symbiosis. The underlying technology hinges on decoding the brain's intricate pulse patterns—a language of spikes and silences that has puzzled scientists for decades.

At the core of this breakthrough lies the challenge of translating analog neural activity into digital commands. Unlike traditional computing with its binary certainty, biological neurons communicate through stochastic bursts of voltage spikes. Early BMI systems relied on crude population-level firing rates, but next-generation chips now analyze precise temporal sequences. This temporal coding paradigm has proven critical: the exact timing between pulses can carry far more information than simple spike counts, because the number of distinguishable timing patterns grows exponentially with the number of time bins while the number of distinguishable counts grows only linearly. Researchers at Stanford demonstrated the value of temporal structure by decoding attempted handwriting from a paralyzed participant's motor cortex signals with roughly 94% raw character accuracy, using a recurrent neural network that tracked how neural activity evolved over time.
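The gap between count-based and timing-based codes can be illustrated with a toy calculation. The sketch below is not taken from any particular BMI system: it assumes a noiseless window divided into discrete bins with at most one spike per bin, and the function names are hypothetical. Under those assumptions, two spike trains with identical counts remain distinguishable by their inter-spike intervals, and the idealized capacity of a timing code grows much faster than that of a count code.

```python
from math import log2

def spike_count(train):
    """Rate-style summary: total number of spikes in the window."""
    return sum(train)

def inter_spike_intervals(train):
    """Timing-style summary: gaps (in bins) between consecutive spikes."""
    times = [i for i, s in enumerate(train) if s]
    return [later - earlier for earlier, later in zip(times, times[1:])]

def count_code_bits(n_bins):
    """Idealized upper bound for a count code: n_bins + 1 possible counts."""
    return log2(n_bins + 1)

def timing_code_bits(n_bins):
    """Idealized upper bound for a binary timing code: 2 ** n_bins patterns."""
    return float(n_bins)

# Two hypothetical 10-bin spike trains (1 = spike in that bin).
train_a = [1, 0, 1, 0, 0, 0, 1, 0, 0, 0]
train_b = [1, 0, 0, 0, 0, 1, 0, 0, 1, 0]

print(spike_count(train_a), spike_count(train_b))                      # 3 3
print(inter_spike_intervals(train_a), inter_spike_intervals(train_b))  # [2, 4] [5, 3]
print(round(count_code_bits(10), 2), timing_code_bits(10))             # 3.46 10.0
```

A count-only decoder sees the two trains as identical, while a timing-aware decoder can separate them. Real neurons are noisy, so the achievable information is lower than these upper bounds suggest.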

The hardware making this possible represents a marvel of interdisciplinary engineering. Modern neural chips integrate ultra-low-power analog front-ends to capture microvolt-scale signals, coupled with neuromorphic digital processors that implement adaptive decoding algorithms. What sets the latest prototypes apart is their ability to operate in a closed loop: they not only read neural patterns but also provide feedback through precisely timed electrical stimulation. This bidirectional capability was showcased when a blind patient perceived rough visual shapes through a retinal implant that translated camera data into pulse trains matching optic nerve patterns.
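Once the front-end has digitized a microvolt-scale trace, the first processing step is usually to find the spikes. The sketch below shows one common convention from the spike-sorting literature, a negative-going threshold set at a multiple of a median-based noise estimate. It is an illustration under stated assumptions rather than a description of any specific chip: the function name, the multiplier `k`, the refractory window, and the synthetic data are all hypothetical.

```python
from statistics import median

def detect_spikes(samples_uv, fs_hz, k=4.0, refractory_ms=1.0):
    """Return (spike times in seconds, threshold in microvolts).

    Assumes extracellular spikes are negative-going and that the trace
    has already been band-pass filtered around zero.
    """
    # Robust noise estimate: median absolute value, scaled so that it
    # approximates the standard deviation of Gaussian noise.
    sigma = median(abs(x) for x in samples_uv) / 0.6745
    threshold = -k * sigma
    refractory = int(fs_hz * refractory_ms / 1000.0)

    spike_times = []
    last_idx = None
    for i in range(1, len(samples_uv)):
        # A spike is a downward crossing of the threshold.
        crossed = samples_uv[i - 1] > threshold >= samples_uv[i]
        if crossed and (last_idx is None or i - last_idx > refractory):
            spike_times.append(i / fs_hz)
            last_idx = i
    return spike_times, threshold

# Synthetic 20 ms trace at a hypothetical 30 kHz sampling rate:
# +/-5 uV background with two -80 uV deflections standing in for spikes.
fs_hz = 30_000
samples_uv = [5.0 if i % 2 == 0 else -5.0 for i in range(600)]
samples_uv[100] = -80.0
samples_uv[400] = -80.0

spike_times, threshold = detect_spikes(samples_uv, fs_hz)
print(spike_times, threshold)
```

The median-based estimate is used here because large spikes inflate a plain standard deviation, which would push the threshold too far from the noise floor on active channels.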

Pulse coding strategies vary significantly across applications. Motor prosthetics typically employ rate coding schemes where spike frequency correlates with movement velocity. Cognitive interfaces, however, are exploring population coding models where information emerges from coordinated firing across thousands of neurons. The most intriguing developments come from hybrid approaches—like the "phase-amplitude multiplexing" technique developed at MIT, which encodes different data dimensions in a single channel by modulating both spike timing and waveform shape. Such innovations are pushing the boundaries of what information can be extracted from neural tissue.
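The difference between rate coding and population coding can be made concrete with a small sketch. It assumes a linear rate-to-velocity map and idealized cosine-tuned neurons, decoded with a classic population-vector sum. The baseline rate, gain, tuning depth, and function names are hypothetical values chosen for illustration, not parameters from any real prosthetic.

```python
from math import atan2, cos, pi, sin

def rate_to_velocity(rate_hz, baseline_hz=10.0, gain=0.5):
    """Rate coding: velocity scales linearly with firing rate above baseline."""
    return gain * (rate_hz - baseline_hz)

def population_vector(rates_hz, preferred_dirs_rad, baseline_hz=10.0):
    """Population coding: sum each neuron's preferred direction, weighted by
    how far its rate sits above or below baseline, and return the angle."""
    x = sum((r - baseline_hz) * cos(d) for r, d in zip(rates_hz, preferred_dirs_rad))
    y = sum((r - baseline_hz) * sin(d) for r, d in zip(rates_hz, preferred_dirs_rad))
    return atan2(y, x)

# Single-channel rate code: 30 Hz maps to 10.0 velocity units.
print(rate_to_velocity(30.0))  # 10.0

# Eight hypothetical neurons with evenly spaced preferred directions,
# each cosine-tuned around the intended movement direction.
intended = pi / 3
preferred = [k * pi / 4 for k in range(8)]
rates = [10.0 + 8.0 * cos(intended - d) for d in preferred]

decoded = population_vector(rates, preferred)
print(decoded, intended)
```

No single neuron in the second example identifies the direction by itself; the estimate only emerges from the weighted sum across the population, which is the property that makes population codes robust to the loss of individual channels.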

Ethical considerations loom large as this technology advances. The ability to both read and write neural codes raises profound questions about cognitive liberty and identity. Recent experiments demonstrating memory encoding in rodents through precisely patterned stimulation have sparked debates about potential misuse. Regulatory bodies are scrambling to establish guidelines, while companies developing commercial BMI products face increasing scrutiny over data privacy concerns. The very nature of these devices—creating direct pathways between brains and machines—challenges existing legal frameworks around thought privacy and augmented cognition.

Looking ahead, the convergence of pulse coding technology with artificial intelligence promises transformative applications. Machine learning models trained on vast neural datasets are beginning to predict intended movements before they physically occur. Meanwhile, researchers are exploring how to harness the brain's natural plasticity to help users "learn" to control devices as intuitively as they do their own limbs. The ultimate goal—seamless integration between biological and artificial neural networks—may soon transition from theoretical possibility to clinical reality, rewriting our understanding of human-machine interaction in the process.